Should AI be used in policing? Two-fifths of the public say it would be no help – whilst a third do

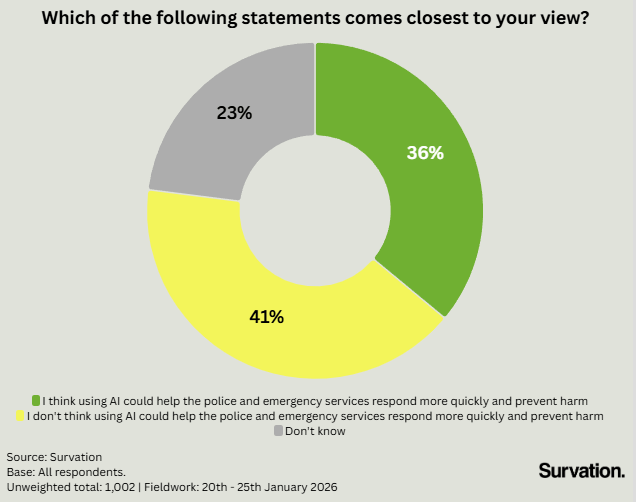

With the use of AI having increased across the workplace and education sectors, police forces around the country are now considering its further deployment. However, Survation found that a plurality of the UK population (41%) do not believe AI can help police and emergency services respond more quickly and prevent harm, and just over a fifth (23%) do not know whether it would help. In total, almost two-thirds of the population (64%) either do not think it would help or are simply unsure whether it can.

This suggests AI use in emergency services is still at an early stage, and public confidence will depend on clear evidence of the benefits and robust safeguards.

Among the 36% of the population who believe AI could improve emergency response and harm prevention, support is not evenly distributed. For example, men are 7 points more likely than women to hold this view, with 40% of men viewing AI as beneficial compared with 33% of women. Age also appears to be a decisive factor: 46% of those aged 25-44 believe AI could help policing, compared with just 27% of those over 65.

Interestingly, people with no qualifications or level 1 qualifications are also less likely to believe AI can help policing (30%) than those with level 4+ qualifications (45%). Younger people and those with higher levels of qualifications, groups we could reasonably expect to use AI the most in their personal and professional lives, feel notably more positive towards AI in policing. The data therefore suggests that greater exposure to AI technologies may make people more amenable to their deployment elsewhere.

Public Scepticism and Real‑World Consequences

Recent events suggest that AI use within emergency services remains in its infancy. Earlier this year, West Midlands Chief Constable Craig Guildford apologised to MPs for giving incorrect evidence produced by Microsoft Copilot. The evidence referenced a football match which never took place, and was used to justify the ban on Maccabi Tel Aviv fans attending an Aston Villa Europa League match in November. The incident highlights the risks of over-reliance on AI tools and helps explain why public confidence is low.

In light of operational missteps and the public uncertainty identified by Survation, it appears AI use in UK policing remains at an early stage. Greater public support will depend not only on technical refinement, but on clear oversight, appropriate training, and transparent public engagement to build trust.

—

GET THE DATA.

Survation conducted an online poll of 1,002 adults aged 18+ in the UK on their attitudes to AI in policing. Fieldwork was conducted between 20th and 25th January 2026. Tables are available here.

________________________________________

Survation is an MRS company partner, a member of the British Polling Council and abides by their rules. To find out more about Survation’s services, and how you can conduct a telephone or online poll for your research needs, please visit our services page.

If you are interested in commissioning research or would like to learn more about Survation’s research capabilities, please contact John Gibb on 020 3818 9661, email researchteam@survation.com, or visit our services page.

For press enquiries, please call 0203 818 9661 or email media@survation.com
